TU Wien:Logic and Computability VU (Ciabattoni)/Oral Exam Questions 2023WS

Logic and Computability[Bearbeiten | Quelltext bearbeiten]

Note, we are extending the solutions of the SS22 questions. Please collaborate...

Ciabattoni[Bearbeiten | Quelltext bearbeiten]

Classical Logic[Bearbeiten | Quelltext bearbeiten]

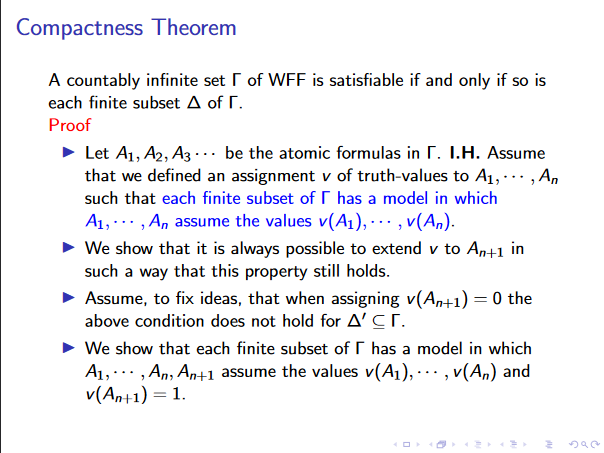

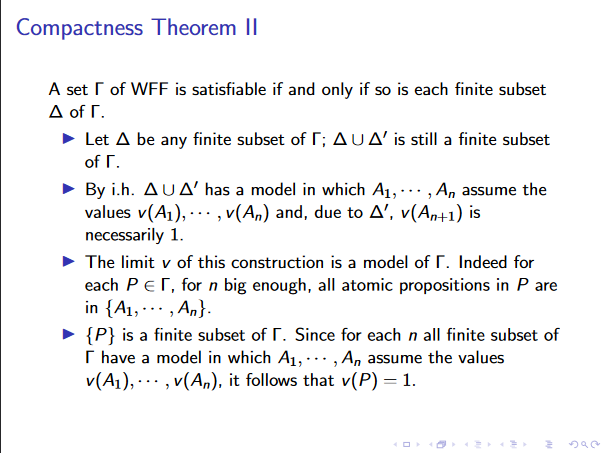

Compactness Theorem[Bearbeiten | Quelltext bearbeiten]

1. The direction "If a countably infinite set of formulas SAT, then so is every finite subset " is trivial.

2. The direction "If every finite subset is SAT, then so is the complete set " is more involved and quite non-trivial. Consider a more detailed explanation e.g., https://www.cs.ox.ac.uk/people/james.worrell/lec8-2015.pdf

In general, the non-trivialness stems from the problem of how to prove something infinite with just finite things, where induction is, in general, not applicable. But, due to an intelligent way of choosing the induction hypothesis (for an arbitrarily large number n of propositional variables/atoms, we are able to prove that we have an infinite amount of satisfying models. From this, one can argue that in the limit, we still have an infinite amount of satisfying models, which, although it seems a bit hand-wavy, is the actual proof. See also the completeness theorem (not to be confused with the incompleteness theorem): Wikipedia-Completeness

Soundness and Completeness of the Propositional Sequent Salculus[Bearbeiten | Quelltext bearbeiten]

- Soundness:

- We want to check that Axiom is valid in classical logic and the inference rules of preserve validity.

- Axiom is valid since is trivially valid. Structural rules (Weakening, Contraction; Permutation) also trivial.

- For logical rules investigate interpretation s.t. all take value and all in value , other cases are trivial.

- Completeness:

- We can show that the calculus rules preserve validity when applied bottom up (similarly to soundness proof). Additionally, when applying rules bottom up the number of connectives will decrease and thus in a finite amount of steps we will reach sequents only containing atomic rules. If a formula , then can be derived from using weakening rules.

Completeness of the Sequent Calculus for first-order Classical Logic[Bearbeiten | Quelltext bearbeiten]

- We need to show that implies is provable in LK'. That is, we show that we will either find a proof or can savely conclude that .

- We use the proposed proof search algorithm, which is a bottom up procedure (applying the sequents bottom-up and alternating between left and right rules).

- We further ensure that all variables introduced in and do not appear free in the conclusion and for and all terms in the domain are eventually considered (e.g. to find the possibly single term that satisfies ).

- If our algorithm finds a proof we are done, since we have shown that the statement can be proved in our calculus.

- otherwise, we end up in two possible scenarios:

- one of the leaves is not an axiom

- Then we can define an interpretation based on the contained atomic formulas. That is, if the leaf is , we set and , thus finding an interpreation that falsifies the initial statement.

- there is an infinite branch:

- Since the branch is infinite, there cannot be an atomic formula that appears on the left-hand side and on the right-hand side of the sequent. Otherwise we could reach an axiom by weakening and would have thus found a proof -> contradicting the inifity of this branch.

- Now, the key insight is, whenever holds, there must be a witness (making trivially true), meaning in some interpretation:

- In an infinite branch, our algorithm will eventually find that witness (and have it reduced to complexity 0 i.e. an atomic formula). Therefore, we would have found a proof, thus again implying .

- Only if , we will never find such a witness. Therefore, the proof search terminates on every positive instance () (*) and but might not terminate on a negative instance ().

- Given (*), LK' for FOL is complete.

- one of the leaves is not an axiom

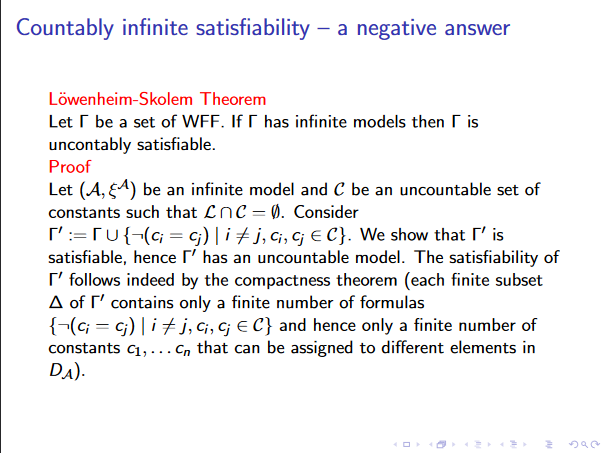

Löwenheim-Skolem Theorem[Bearbeiten | Quelltext bearbeiten]

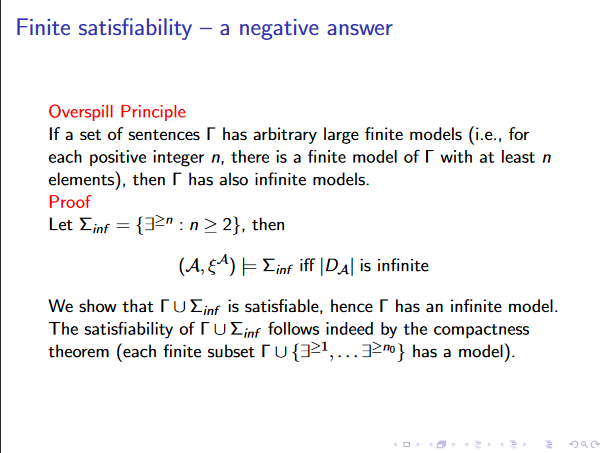

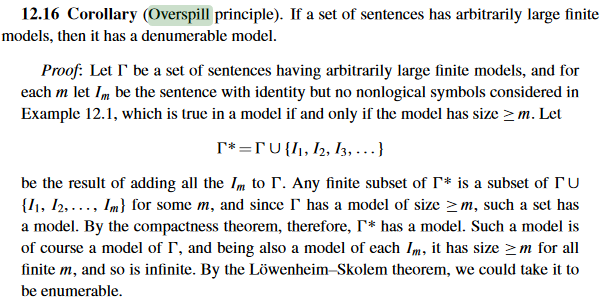

Overspill Lemma[Bearbeiten | Quelltext bearbeiten]

- where represents the formula

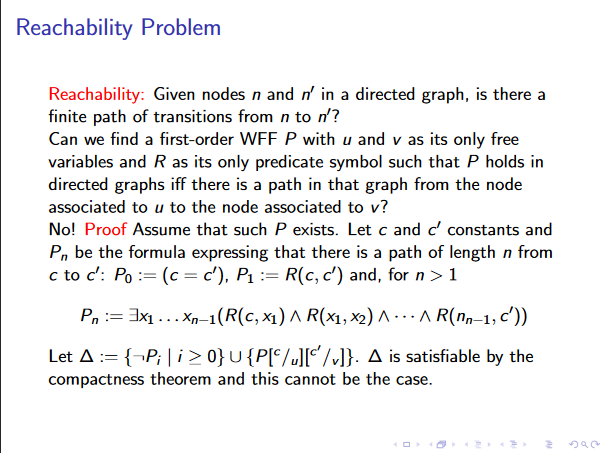

Reachability Problem (non-expressibility in first-order logic)[Bearbeiten | Quelltext bearbeiten]

- Although the above result might be unintuitive, it holds as we take strong enough assumptions (e.g., infinite graphs)

- The contradiction follows as we say both, reachable and unreachable, and this is satisfiable.

- As obviously, for each finite graph , one can construct a WFF that models reachability.

Computability Theory[Bearbeiten | Quelltext bearbeiten]

Fixed Point theorem in lambda calculus[Bearbeiten | Quelltext bearbeiten]

- Let , then we define the turing fixed point , which is defined as

- Every lambda term has a fixed point, i.e.,

Non Existence of an Algorithm for each (decision) Problem[Bearbeiten | Quelltext bearbeiten]

- Each decision problem can be seen as a function

- Each algorithm computes a function

- algorithms operate on various objects but we can encode them into

- is uncountable

- Corollary to diagonalization lemma

- Main idea: Construct a function which maps natural numbers to functions, then construct inverse function .

- There are only countably many algorithms

- There are algorithms

- Let be a formal system which describes algorithms

- Let be the set containing all algorithms in

- Each algorithm in computes a function

- Alphabet of has a finite number of symbols

- A bijection can now be built between and by ordering the algorithms first according to the number of symbols and then lexicographically

- There are algorithms

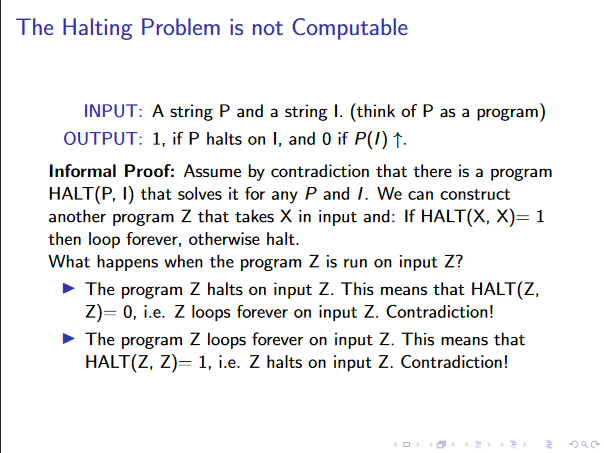

Undecidability of the Halting Problem[Bearbeiten | Quelltext bearbeiten]

- TODO - Add formal proof

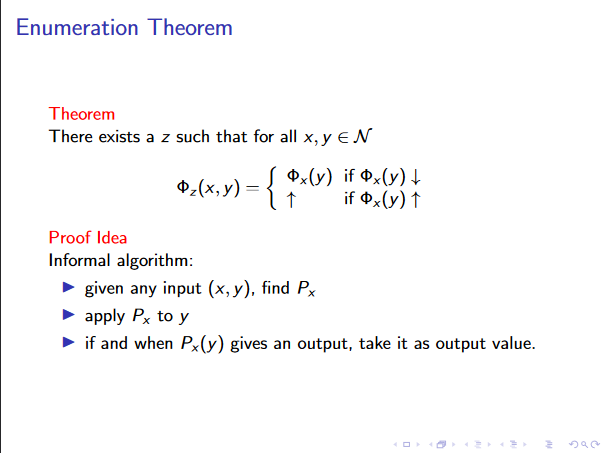

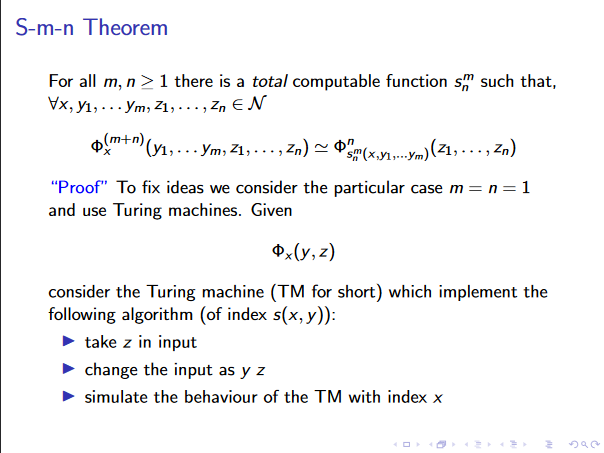

Enumeration and s-m-n Theorem (intuitive proofs)[Bearbeiten | Quelltext bearbeiten]

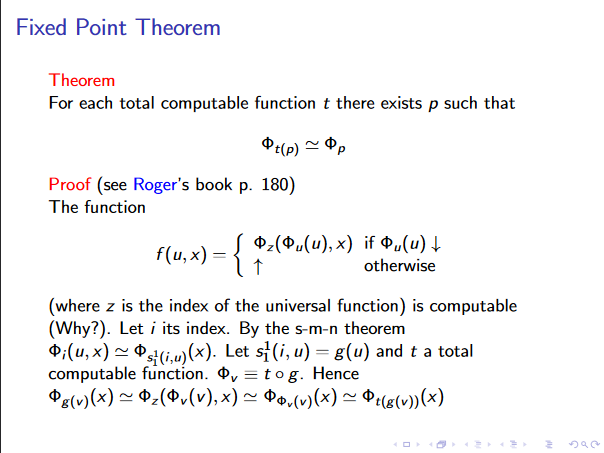

Fixed Point Theorem for computable Functions[Bearbeiten | Quelltext bearbeiten]

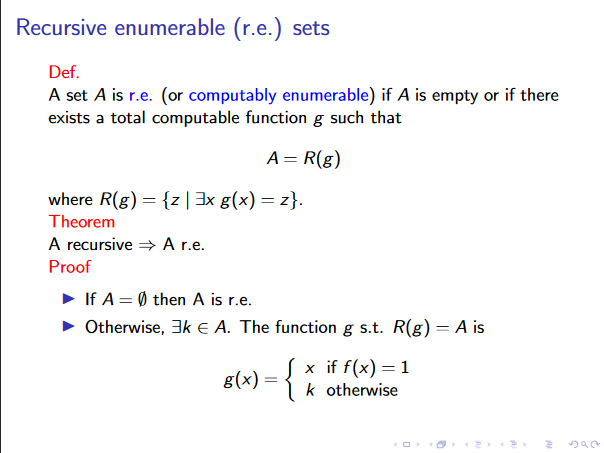

Each Recursive Set is R.E.[Bearbeiten | Quelltext bearbeiten]

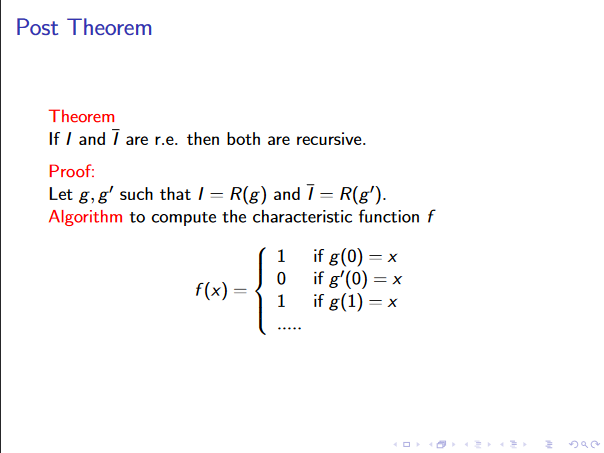

Post Theorem[Bearbeiten | Quelltext bearbeiten]

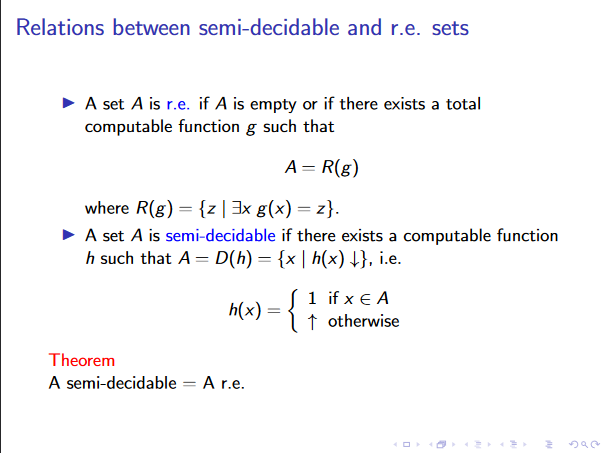

Equivalence between R.E. Sets and semi-decidable sets[Bearbeiten | Quelltext bearbeiten]

- I r.e. s.t. and s.t. is

- h(x) := …

- is g(0) = x?

- is g(1) = x?

- …

- h(x) := …

- I semi-decidable total computable s.t. , where .

- Let and s.t.

- if then is r.e. by definition

- otherwise there . Using dovetailing

- x if in n steps

- k otherwise

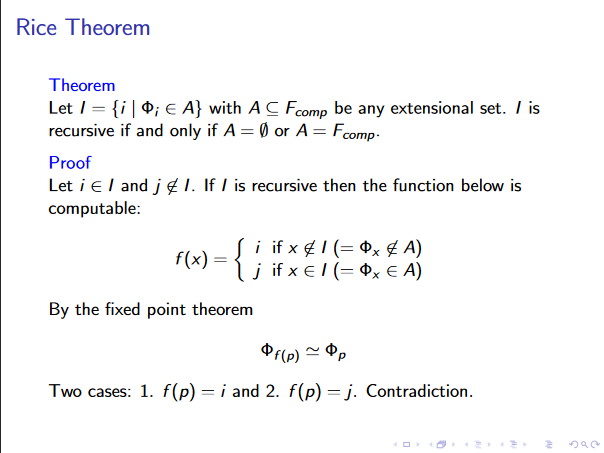

Rice Theorem[Bearbeiten | Quelltext bearbeiten]

Fermüller[Bearbeiten | Quelltext bearbeiten]

Automated Deduction[Bearbeiten | Quelltext bearbeiten]

Why is Herbrand’s Theorem important for automated deduction?[Bearbeiten | Quelltext bearbeiten]

- A universal prenex formula is unsatisfiable iff there exists a finite unsatisfiable set of instances of the quantifier-free .

- Allows for instantiation of FOL formulas, these can then be treated like propositional formulas.

- thus it provides a complexity reduction which is the base of automated deduction procedures

On what principles are state-of-the-art provers for first-order logic based?[Bearbeiten | Quelltext bearbeiten]

- unification: compute most general unifiers

- atomic cut: propositional resolution rule (cut atom and its negation)

Can there be a computable function that bounds the size of the search space of a first-order prover in terms of the size of the input (formulas of set of clauses)? (Explain!)[Bearbeiten | Quelltext bearbeiten]

- No:

- then we would have a decision procedure (finite search space) and we know that validity of first order formulas is only semi-decidable.

- if yes, we could limit the search space to a finite space which would make it decidable (i.e. FOL would no longer be undecidable)

What is the idea of structural clause forms? What can be gained (relative to traditional clause form translation) in terms of size of clause forms, minimal refutations, and search space, respectively?[Bearbeiten | Quelltext bearbeiten]

- Definitional translation (extended/FOL Tseitin): introduce new predicate for each subformula, validity preserving

- Gain: only constant number of clauses for each subformula (linear in the propositional case, quadratic with quantifiers ) (otherwise worst case exponential), sometimes smaller refutations, larger search space since more variables (but smaller formulas at the same time)

What does ‘most general unification’ mean? Why is it so important for automated deduction? How does it occur there? Can it be implemented efficiently?[Bearbeiten | Quelltext bearbeiten]

- Generality:

- We say is more general than if s.t. holds.

- A substitution is more general than if expressions , is more general than .

- is a mgu of if is a unifier of and is more or equally general than any other unifier of .

- Importance:

- When searching for a refutation, it is sufficient to just check all most general instances, as if a refutation is found for any general instance, it can also be found with a more concrete instance.

- We do not spoil future resolutions when applying them.

- It is also necessary for resolution. (You need a literal you can resolve upon when doing resolution and such a literal can be only found by unification).

- Efficiency

- Yes, shown by Robinson and Martelli.

In what sense is Robinson resolution complete and sound?[Bearbeiten | Quelltext bearbeiten]

- It is refutationally sound and complete, i.e.

- if a formula is unsat, then we will be able to resolve to the empty clause and vice versa

Why does one have to speak of refutational completeness? What exactly does this mean?[Bearbeiten | Quelltext bearbeiten]

- https://en.wikipedia.org/wiki/Completeness_(logic)#Refutation-completeness

- Note that in general, binary/robinson resolution is not complete for FOL formulas (satisfiable formulas),

- however, if a formula is unsat, then we will be (always) able to resolve to the empty clause

- And as it is sound, if we derive the empty clause a formula is unsat

Why is binary resolution not complete in general? Can you give an example?[Bearbeiten | Quelltext bearbeiten]

- https://en.wikipedia.org/wiki/Completeness_(logic)#Refutation-completeness

- As in general, FOL is not complete (E.g., SAT)

- Possibly the set , as it is SAT:

What is factorization? Why is it needed?[Bearbeiten | Quelltext bearbeiten]

- A p-reduction on a G-instance of a clause.

- This means a smaller formula resulting in leaving out redundant literals after a renaming of a clause.

- We think it is necessary, as try to apply resolution (without the p-reduct) on the following sets of clauses: and .

What is a semantic tree? How can we use it to prove completeness of resolution refinements and of redundancy elimination mechanisms (e.g., tautology elimination and subsumption)?[Bearbeiten | Quelltext bearbeiten]

- Represents all Herbrand interpretations of a clause set , where each branch induces an assignment of truth values to the herbrand base of .

- Intuitively, a single branch (going from some node up to the root), corresponds to a "partial" interpretation

* A branch fails if some clause fails under this partial interpretation * Then such a branch is finite

- If all branches are finite, then at every branch some node fails, and consequently, each possible assignment leads to a contradiction (and the formula is unsat)

- And finally, if all branches are finite, the tree is finite

- Things that won't fail at any node of the tree can be removed from the set.

- Subsumption:

- E.g., clause {a,b} subsumes {a,b,c}, so we can remove {a,b,c}

- As whenever {a,b,c} fails, also {a,b} fails

- Although the converse is not necessarily true, the step is still sound, as clauses are conjuncted upon (so if {a,b} fails the whole formula is already false)

Why does one have to consider refinements (like A-orderings) in practice?[Bearbeiten | Quelltext bearbeiten]

- This speeds up search.

- Since the number of resolvents grows exponentially, pruning possibilities is helpful.

- (Maybe: Starting with highest nesting depth clauses yields an overall lower nesting depth (Note: needs to be double checked))

Why is quantifier scope minimization significant (in theory and) in practice?[Bearbeiten | Quelltext bearbeiten]

- General in context of definitional clause form (i.e., the tseitin form for FOL)

- Leads to a large speed-up

- Applies quantifier shift rules, i.e., tries to "shift" quantifiers inwards

Intuitionistic Logic/Constructivism[Bearbeiten | Quelltext bearbeiten]

What is the basic difference between constructive and classical reasoning?[Bearbeiten | Quelltext bearbeiten]

- constructive:

- We construct (require) decision procedures for proving something

- I.e., if something is true, we require a decision procedure

- focus on verification, we can only assert truth after

- epistemic (valid = invariantly known)proving/verifying it

- classical:

- every statement is assumed to be either true or false

- ontological (valid = invariantly true)

What is the (effective) disjunction principle? How does it relate to constructive existence?[Bearbeiten | Quelltext bearbeiten]

- We guess that this question is about the law of the excluded middle, which does not hold for intuitionistic logic

- I.e., we can only assert once we have proved either or (found a construction procedure for it)

- A proof of the existence of an object has to inform us on how to construct the object.

- We can translate it to constructive existence.

- Constructive Existence: Given an existential quantification, one needs to find a witness (procedure), which is needed for the proof (more or less translates to the fact that indirect proofs are not allowed in intuitionistic logic)

Brouwer claimed that mathematics is more fundamental than logic. Which consequences/analogues can we formulate computer science? (Explain!)[Bearbeiten | Quelltext bearbeiten]

- When investigating underlying logic one should derive logic from underlying principles and not other way around.

- Consequences for computer science:

- TODO (We are not sure)

- first investigate what should be accepted as a good analysis of a model and then look at what that means for a good underlying logic

- We derive certain logics or logical formalisms for concrete human/scientific needs, e.g., we derive special logics (STRIPS should be one such example) for planning problems, and do not derive the problems from logics

- Algorithms in general are the computer science equivalent to constructive proofs in mathematics.

Why are intuitionistic proofs useful for programming? What is the Curry-Howard correspondence? Which types of programming languages are based on this relation?[Bearbeiten | Quelltext bearbeiten]

- Because an intuitionistic proof delivers a process for constructing an object (i.e. using a program).

- States that the mathematical proofs and computer programs are equivalent. (They represent the same kind of mathematics objects.)

- Curry-Howard correspondence:

- Isomorphism between programs and proofs intuitionistic logic.

- Direct relationship between (mathematical) proofs and programs, i.e., a program can be considered as a proof

- A proof is a program and the formula it proves is the type for the program.

- From every proof in intuitionistic logic, we can derive a program (and vice versa)

- Which types of programming languages are based on this relation?

- Functional languages

What does the Collatz (3n +1 problem) example illustrate?[Bearbeiten | Quelltext bearbeiten]

- Collatz conjecture:

- For even numbers: Divide by 2

- For odd numbers: Multiply by 3 and add 1 (3n+1)

- After some numbers of steps, we always obtain 1

- It is a conjecture which is widely accepted to be true, while no one has found a proof for its truth as of yet.

- Halve even numbers, for odd: triple and then plus one and you will always end up at one for arbitrarily large integers.

- Very easily specifiable program which could not yet be verified, also interesting in terms of incompleteness.

What is the idea of BHK semantics? Explain the meaning of logical connectives and quantifiers according to that interpretation.[Bearbeiten | Quelltext bearbeiten]

- An interpretation in BHK semantics defines how to prove a given formula.

- A connective is defined according to how you prove it.

- Provides semantics for IL.

Explain why tertium non datur is constructively unsound.[Bearbeiten | Quelltext bearbeiten]

- Because the absence of a proof of a formula does not necessitate the existence of a proof for its negation.

Is ex falso quodlibet constructively sound? Why?[Bearbeiten | Quelltext bearbeiten]

- Yes. If we can prove an inconsistency i.e. prove a formula as well as its negation, we can derive anything from this.

- https://math.stackexchange.com/questions/2922457/why-is-the-principle-of-explosion-accepted-in-constructive-mathematics

- If and are both assumed, then holds. Since we have a proof for , for some arbitrary the disjunction holds. From and follows .

- This argument holds in classical and constructive logics.

Can you name a few other classically valid formulas that are not intuitionistically valid?[Bearbeiten | Quelltext bearbeiten]

Modal Logic[Bearbeiten | Quelltext bearbeiten]

What is the basic idea of modal logic? What does ‘modal’ mean here?[Bearbeiten | Quelltext bearbeiten]

- Developed in order to represent necessity and possibility.

- Maybe take a look here: Stanford

- What does ´modal´ mean?

- According to the Stanford Encyclopedia of Philosophy, it says: A modal is an expression (like ‘necessarily’ or ‘possibly’) that is used to qualify the truth of a judgement.

- The mode of assertion (connectives?).

- E.g., an agent knows

- So a modal should refer to one element of the the set of "special" logical connectives, like (or more in multi-modal logics...).

Why are there so many different modal logics?[Bearbeiten | Quelltext bearbeiten]

- Different schools of philosophy/reasoning

- They allow modal logics to be applied in various different concepts, i.e., there are for example:

- temporal logics, which model temporal aspects (e.g., until, etc.)

- Deontic (e.g., obligatory, permitted, forbidden)

- Epistemic (e.g., knows)

- Doxastic

- ...

Can you name a few different possible interpretations of the Box-operator? Can you name different application scenarios in computer science where there interpretations are useful?[Bearbeiten | Quelltext bearbeiten]

- Necessarily, vs. possibly

- Always holds, vs. at some time

- A Knows X, vs. "X deems consistent with what is known to A"

- provable, vs. not not refutable

- holds at every terminating state, vs. holds at some terminating state

Can you derive the informal meaning of Diamond, given the informal meaning of Box? Why and how? (Illustrate with concrete examples)[Bearbeiten | Quelltext bearbeiten]

- Yes, it is .

- Informal: Different interpretations for (possibly) and (necessarily)

- If I do not know that X is false, then it makes sense that I believe (consistent with my knowledge) that X holds.

What is a Kripke frame? What is a Kripke interpretation?[Bearbeiten | Quelltext bearbeiten]

- They are a version of possible world semantics.

- Frame:

- Interpretation:

What four levels of truth can be distinguished in modal logic?[Bearbeiten | Quelltext bearbeiten]

- Truth in a world:

- Truth in a model:

- Truth in a frame:

- is valid in if is true in all interpretations of

- Truth in a class of frames: is true in if - where is a class (set) of frames

How does one specify a specific normal modal logic semantically?[Bearbeiten | Quelltext bearbeiten]

- (Slide 13 modal logics)

- Contains all CL-tautologies (classical logic),

- the K axiom, and

- the necessitation rule

Explain the purely syntactic notion of a ‘logic’. Why is it useful?[Bearbeiten | Quelltext bearbeiten]

- A set of formulas which is:

- closed under substitutions

- closed under modus ponens

- I can compare different axiom schemes, different derivation rules, different Hilbert-type systems arising from either adding or removing certain axioms. So we can compare those logics then in the most basic way, namely in the ways of set inclusion. Is the one contained in the other or are they incomparable sets? That’s what we often want to know about logics.

What is the necessitation rule? Why is it sound?[Bearbeiten | Quelltext bearbeiten]

- Intuitively: If F holds in every interpretation in every world, it must hold for all interpretations, for all worlds, in all connected (outgoing) worlds.

- We can derive from

- TODO - Check the things below:

- can be read like MP, if can be derived so can

- since we are in Hilbert system there are no assumptions, thus you can only derive tautologies

Why is the deduction theorem (in it’s usual form) not valid for usual modal logics?[Bearbeiten | Quelltext bearbeiten]

- We can always derive from , but

- does not hold in general

- Counterexample: interpretation and

Incompleteness[Bearbeiten | Quelltext bearbeiten]

How does Smullyan’s Gödelian puzzle relate to the incompleteness theorem(s)?[Bearbeiten | Quelltext bearbeiten]

- Because the statement “I am not a knight” can neither be shown to be true or false in the given “system”.

- So this is a self-contradicting statement.

Why do we need to use two different signs for natural numbers? What is the difference?[Bearbeiten | Quelltext bearbeiten]

- To differentiate between the domain of the natural numbers and the structure of the natural numbers

- … set of natural numbers

- … structure of natural numbers

What is the difference between incompleteness in the sense of Theorem GT and Gödel-incompleteness? How do these notions relate to syntax and semantics, respectively?[Bearbeiten | Quelltext bearbeiten]

- GT: If is sound and expressible in it, then is incomplete.

- G: If PM (Principia Mathematica) is not -inconsistent and is representable in it, then PM is Gödel-incomplete.

- Difference: Gödel incomplete refers to a system containing formally undecidable sentences. (i.e. a sentence is contained neither in the provable nor refutable sentences of the system).

- GT is about being expressible, i.e., the set is formed by all numbers s.t. its characteristic predicate is true -> semantic notion.

- G is about being representable, i.e., the set is formed by all numbers s.t its characteristic predicate is provable -> syntactic notion.

What is more difficult in general: deciding whether a given formula is true in all possible interpretations of whether it is true in a given concrete interpretation?[Bearbeiten | Quelltext bearbeiten]

- In all possible interpretations, since things get more complicated when they are more concrete.

- Gödel showed:

- Completeness of FOL holds on general level.

- Incompleteness of FOL in arithmetic.

Alternative:

- When we restrict ourselves to the propositional (and finite) case, the answer is evident (concrete is easier than general):

- SAT is in NP (finding a model)

- CHECK is in P (deciding whether a given interpretation is a model).

- This distinction that CHECK is about one level easier than SAT also holds for various fragments of FOL (e.g., ASP))

- (TODO - Please Verify) When we go to FOL the contrary seems to be the case:

- Take as a concrete instance arithmetic: It is a concrete instance of FOL (with some additional axioms). We derive that arithmetic is incomplete in general (i.e., there exists a formula that is valid and can not be proved).

- In contrast to this, for the general case (FOL) there exists a proof for all valid (or unsat) formulas.

- Note the following properties:

- We know that TAUT is semi-decidable, so R.E., but not recursive.

- So SAT is not even semi-decidable (so SAT is not computable in any way).

- I think that CHECK is also not computable for FOL, as I think the complement is semi-decidable (but not recursive).

What is the difference between (arithmetic) definability and (arithmetic) representability?[Bearbeiten | Quelltext bearbeiten]

- Compare: ADRF (All recursive functions and predicates are arithmetically definable) vs. ARRF (all recursive functions are representable in usual arithmetic proof systems)

- I think that the difference is in semantics (ADRF) vs. syntax (ARRF), i.e., ARRF is a somewhat stronger notion of ADRF (due to soundness).

- Preliminaries:

- A defining formula F(v) defines a certain object (e.g., relation/function/number-set). It seems to me that a defining formula for Truth-Arithmetic (TA) corresponds to the abstract notion of expressibility, i.e., semantics.

- In contrast to that, representability seems to be the concrete instance of TA for representability, i.e., syntax (provability).

- Representable definition: f is representable in system if all true instances of its defining formula F are provable in .

- Definability: iff vs. representability: iff for some .

What does the Diagonalization Lemma say? What is the essential proof idea?[Bearbeiten | Quelltext bearbeiten]

- If is expressible in , then there exists a Gödel sentence for A in .

- Proof idea: Assume the expressibility of by H, then unpack definitions.

- Preliminaries:

- S is a Gödel sentence for a number-set A if: .

What is the difference between formal undecidability in Gödel’s sense and undecidability in the sense of computability theory? Can a theory with formally undecidable sentences be decidable? Can a theory , where for each , either or be undecidable?[Bearbeiten | Quelltext bearbeiten]

- What is the difference?

- Formal undecidability - Gödel: sentences which are neither provable nor refutable.

- Undecidability - CT: If there does not exist an algorithm that checks a property

- Can a theory with formally undecidable sentences be decidable?

- Let's assume the following relation to undecidability for a theory: Given a theory, is there a way to check whether a statement is true/false? (See also Wikipedia (Decidability of a theory)

- Then it is evident that it is not decidable, as there is no way for every statement to check this, more or less by definition (as the formula is formally undecidable, there is no procedure which can check for every statement that the statement is true or false)

- Can a theory , where for each , either or be undecidable?

- I think yes, as I think this question relates to TA.

- I.e., for all F either F in TA (true), or not; but according to our corollary on slide 26 TA is undecidable...

What is ‘true arithmetic’()? Why is it well defined? How does it compare with Peano Arithmetic ()?[Bearbeiten | Quelltext bearbeiten]

- What is true arithmetic:

- The set of sentences that are satisfied in model (or PA, as and PA are about the same thing)

- is ordinary FO-Logic plus peano axioms.

- Why is it well defined?

- We define well-defined as: "A well-defined expression is an expression whose definition assigns it a unique interpretation of value." (similar to Wikipedia - Well-defined expression)

- Further, we think that PA is consistent (no sentence is both true and false at the same time).

- Therefore, each true sentence of PA cannot be false at the same time (so is uniquely defined as true).

- From which we can conclude that the set of true sentences is well defined (no element of TA has two values).

- PA vs. TA:

- PA is a system (classical first order theory), which in addition to pure logic has a few non-logical axioms.

- In contrast to this TA is a set (not a system), which contains exactly those sentences which are true in PA.

In what specific sense is arithmetical truth undefinable? Do you see a relation here with the liar paradox? In which sense is undefinability of truth different from (Gödel-)incompleteness?[Bearbeiten | Quelltext bearbeiten]

- In what specific sense is arithmetical truth undefinable?

- Intuitive idea behind Tarski: No sound and reasonably expressive system of arithmetic can define the set of (arithmetically) true sentences.

- In the sense of Tarski's Undefinability Theorem, which asserts that the set is not definable in Formal Arithmetic.

- But note that TA is the set of true arithmetic (TA), therefore we CANNOT DEFINE TA.

- Also look here: Wikipedia

- Do you see a relation here with the liar paradox?

- https://en.wikipedia.org/wiki/G%C3%B6del%27s_incompleteness_theorems#Relationship_with_the_liar_paradox

- The liar paradox states: "This sentence is a lie."

- This seems to correspond to the proof of the Theorem T, to be more concrete with the proof of the Gödel Sentence of cannot exist (as is true iff it is in the set of false sentences (i.e., it is true iff it is false))

- (Maybe also): Assume we could define TA. Then we could define the liars paradox (as by G1 (slide 11) we could also define ). But then we would solve the liars paradox, as it would be either true, or false (but it is neither...).

- In which sense is the undefinability of truth different from (Gödel-)incompleteness?

- If we refer to arithmetic definability specifically, then we have that a number set is arithmetically definable/expressible if for a given formula .

- Therefore, (arithmetic) undefinability of a number set refers to the impossibility of finding a formula that expresses , while (Gödel)-incompleteness refers to the impossibility of establishing the membership of every formula to either or .

- Should be: Difference between syntax (Gödel's incompleteness) and semantics (undefinability), i.e., we have something that is neither true nor false, and something that is neither provable, nor refutable.

What is the ADRF Theorem? What is the proof idea?[Bearbeiten | Quelltext bearbeiten]

- It states that all recursive functions are arithmetically definable.

- Church-Turing thesis: all computable functions are arithmetically definable

- We apply induction on the possible ways of function can be built.

- Base: projection, constant-0 and successor

- Inductive cases: composition, primitive recursion, minimisation. Show witness formula for definability.

- Predicates can be coded as characteristic functions

How does one arithmetically define a function / a predicate / a number set?[Bearbeiten | Quelltext bearbeiten]

- function: n+1-ary predicate with output as last parameter

- predicate: characteristic function

- number set: formula takes free variable and is true iff corresponding number is in set

What is Gödel’s second incompleteness theorem? Why was it considered to be even more important than his first incompleteness theorem in 1931?[Bearbeiten | Quelltext bearbeiten]

- If the provability predicate for a proof system is representable in and satisfies the provability conditions then is only provable if is inconsistent.

- provability conditions: (using provability predicate describing provability)

- then

- provability conditions: (using provability predicate describing provability)

- Because it showed that the Hilbert problem of finding a proof of consistency using an elementary system (not stronger than arithmetic) could not be positively solved (from inside the system alone).

[Bearbeiten | Quelltext bearbeiten]

- Incompleteness was shown first (1931 by Gödel; that's what this lecture is about...)

- Undecidability was shown in 1936 (Alonzo Church - Some problem in lambda calculus)/1936 (Alan Turin - Halting Problem)

- Incompleteness to Undecidability:

- Hard direction (or maybe impossible to show it).

- But we have a nice corollary, which is related here:

- From the incompleteness theorem we get undecidability of as a corollary (slide 26). This is as TA is not recursively axiomatizable.

- Undecidability to incompleteness:

- Assume we have a sound and complete formalism.

- And then (i) take Halting, (ii) put it into this formalism and (iii) assume we can express/define Halting in our formalism.

- As our system is sound and complete we can either prove or refute Halting (which is not possible, i.e., Halting is an instance of something that can neither be proved nor refuted)

What is the significance of incompleteness for software verification?[Bearbeiten | Quelltext bearbeiten]

- From incompleteness we know that there cannot be a proof system that allows verification of all programs in a reasonably rich language.

- If you fix a proof system for establishing program correctness, then there are cases that cannot be verified by the system.

- (Cpy-paste from some other summary which sounds intelligent): Even if everything is decidable, if our calculus is “expressive enough”, it will be incomplete, meaning that there will always be true assertions (such as preconditions, postconditions and invariants) that are not provable in the calculus. Incompleteness gives us a limit to what can be done in software verification. It can be shown that there cannot exist a system that given arbitrary precondition, an arbitrary postcondition, and a program, answers if the program satisfies these or not.

- I further think that it is important to state that neither total nor partial correctness can be proved in general.